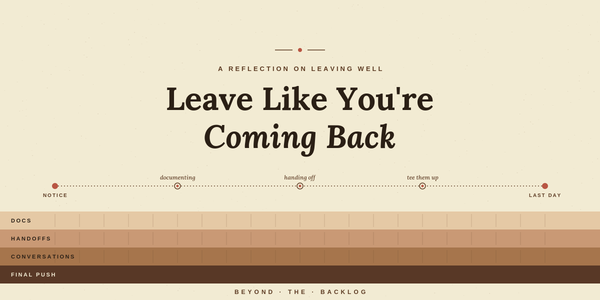

Leave Like You're Coming Back

Last week I wrote about what 4.5 years at Ontra taught me. This is the companion piece: deciding to leave, and how to leave well once you do. The decision itself was one I went back and forth on a lot. I had seen Ontra through much